Obesity is a global health problem. It is obviously linked to diet in some way, but the exact nature of this link is the subject of volumes of research, and also of heated exchanges online. One school of thought, occasionally exhibiting quasi-religious tendencies in some of its advocates, claims that the obesity epidemic is mainly caused by our diet being too rich in vegetable oils and in carbohydrates from cereals and other such sources. As a solution, they advocate changing to a diet rich in animal fats, meat, eggs and so on.

Inspired by the coverage of a recent piece in The Lancet about rising obesity rates, as well as a somewhat uncritical book review in The Economist, I decided to see for myself if the publicly available data on obesity and diets could be tortured into confessing something on the issue.

I need to emphasize that this is not a scientific study. Describing the methods used as rigorous would be a stretch, to say the least. A few potential problems with the data and with my handling of it are outlined at the end of this article, and the list is by no means exhaustive. What this is, is a bit of lightweight data journalism that will hopefully inspire discussion and possibly more serious research into the data.

I used this WHO data on obesity (the same used by the Lancet authors), combined with agricultural statistics from FAO to see if the number of overweight and obese people in a country was correlated with the intake of various foods.

To capture the effect of changing diets, I used the data from several different years within a single country as separate data points where historical data was available. If you disagree with this choice, you can switch the view to show only the most recent data.
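The difference between the two views can be sketched in a few lines of Python. This is only an illustration, not the script used for the visualization: the `Observation` record, its field names and the sample numbers are all made up for the example.

```python
from typing import NamedTuple


class Observation(NamedTuple):
    # Hypothetical record layout, not the article's actual data format.
    country: str
    year: int
    overweight_pct: float  # share of overweight adults, in percent


def latest_per_country(observations):
    """Keep only the most recent observation for each country."""
    latest = {}
    for obs in observations:
        current = latest.get(obs.country)
        if current is None or obs.year > current.year:
            latest[obs.country] = obs
    return sorted(latest.values(), key=lambda o: o.country)


# Made-up illustrative numbers, not taken from the WHO data.
sample = [
    Observation("A", 1990, 40.0),
    Observation("A", 2005, 48.0),
    Observation("B", 2000, 30.0),
]

all_points = sample                          # every country-year is a data point
recent_points = latest_per_country(sample)   # one data point per country
```

In the first view a country with a long history contributes several points, so it weighs more heavily in any correlation than a country with a single survey.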

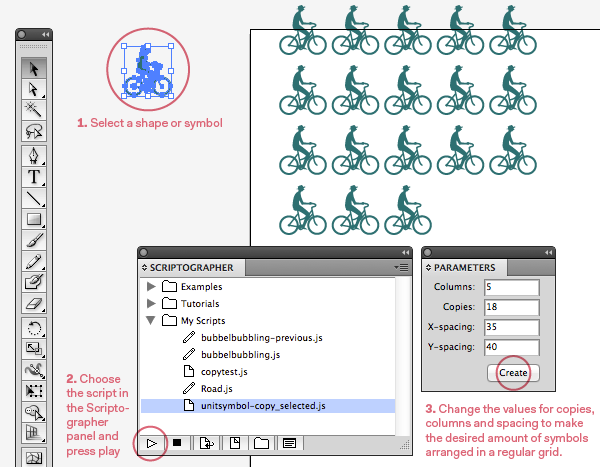

The end result is below, an interactive scatterplot that shows how the consumption of various foods correlates with the number of overweight and obese people in each country. The idea of the visual presentation is that the reader can look at the full dataset and not need to rely on single numbers such as averages or correlation coefficients.

In light of these numbers, there is no evidence that high cereal consumption is linked with obesity on a country level. If anything, the correlation between the share of overweight adults and cereal consumption is mildly negative (r = –0.18). With starchy roots (such as potato) there is no correlation whatsoever (r = 0.08).
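For readers who want to check figures like these themselves, here is a minimal sketch of how such a coefficient can be computed. It assumes the r values are ordinary Pearson correlation coefficients, which the article does not state explicitly, and the function name `pearson_r` is just a label chosen for this example.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equally long sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length, at least 2 items")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of products of deviations, and sums of squared deviations.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_x * var_y)
```

Here `xs` would be the consumption of one food group (kg/capita/year) and `ys` the share of overweight adults, one pair per data point. The result ranges from -1 (perfectly opposite) through 0 (no linear relationship) to 1 (perfectly aligned).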

The correlation between vegetable oils and overweight is moderate (r = 0.33), though not much greater than with the consumption of animal fats (r = 0.23). It should be noted, though, that the consumption of animal fats is very small in most non-Western countries, so not very many conclusions can necessarily be drawn from this comparison.

The strongest correlation in the data with the share of overweight adults is with meat consumption (r = 0.5). Not surprisingly, the correlation with sugar and sweeteners is also reasonably strong (r = 0.43).

The correlation between meat consumption and obesity is probably at least partially due to the fact that higher meat consumption is typical of higher living standards overall, which also often means a higher total calorie intake and less physical work. The existence of these types of confounding variables is amply demonstrated by the fact that the correlation of overweight with the consumption of fruits is also moderate (r = 0.32). Practically no one believes eating fruits makes you fat, so the explanation is probably that fruit intake is also simply correlated with higher living standards.

So what’s the take-home message? My reading of the data is that no single group of foods is responsible for the obesity epidemic by itself, and certainly not cereals. This sort of population-level comparison using somewhat patchy data can hardly settle the matter by itself, but I would still argue that if cereals (and carbohydrates in general) were really so bad, there should be a sliver of the effect visible in the data even on this coarse level. Which there isn’t, as you can see.

The jury is still out on vegetable oils, but if we want to explain away the high correlation of meat consumption with the share of overweight adults, I would argue similar confounding factors are to be found here; the use of vegetable oils in the West has risen with the overall rise of living standards. So if you want to argue that the correlation of obesity with meat intake is spurious, the same should probably be said of the clearly weaker correlation with vegetable oils – and vice versa.

The next step would be to compare the calorie intake from different kinds of foods instead of the absolute numbers (kg/capita/year), which could possibly help to overcome the fact that a rise in living standards affects both the total calorie intake and the mix of different types of foods consumed.
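A rough sketch of what that conversion could look like is below. The function name and the energy densities are placeholders invented for the example, not real nutritional values; a serious attempt would need per-food energy figures from a proper source.

```python
def calorie_shares(supply_kg, kcal_per_kg):
    """Return each food group's share of total calories (fractions summing to 1).

    supply_kg:   food group -> supply in kg/capita/year
    kcal_per_kg: food group -> energy density in kcal per kg
    """
    kcal = {food: kg * kcal_per_kg[food] for food, kg in supply_kg.items()}
    total = sum(kcal.values())
    if total <= 0:
        raise ValueError("total calorie supply must be positive")
    return {food: value / total for food, value in kcal.items()}


# Placeholder numbers for illustration only, NOT real nutritional data.
supply = {"cereals": 100.0, "meat": 50.0}
density = {"cereals": 3000.0, "meat": 2000.0}
shares = calorie_shares(supply, density)
```

Working with shares rather than absolute amounts means a country that simply eats more of everything no longer stands out for every food group at once.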

Potential sources of error

Apart from the whole project being executed within the span of two working days, and by a designer with no scientific training to speak of, there are some specific details in the data and how it was processed that can be sources of error.

FAO’s data shows the “food supply”, that is, the food theoretically available for human consumption, not the actual food intake. Factors such as wastage are not taken into account, and may vary from country to country.

WHO’s data on obesity is collected using methods and samples differing from country to country and may thus not be directly comparable. There were some examples in the data where a change in the numbers was clearly an artifact of the data collection process, not representative of the change in the facts on the ground; for example the share of overweight people dropping from 59.8 to 46.2 percent in a single year in Australia 2000–2001. In such cases the most recent data was assumed to be reliable, and the older data was discarded.
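The rule of keeping only the data after such a break could be sketched as follows. This is an assumption about how one might automate it, not a description of how it was actually done; in particular the `max_jump` threshold of 10 percentage points is invented for the example. Only the two Australian figures come from the data discussed above.

```python
def drop_before_last_break(series, max_jump=10.0):
    """Return the points after the most recent implausible year-on-year jump.

    series:   iterable of (year, overweight_pct) tuples
    max_jump: largest change between consecutive years (in percentage
              points) still treated as plausible; an arbitrary assumption
    """
    points = sorted(series)
    start = 0
    for i in range(1, len(points)):
        prev_year, prev_val = points[i - 1]
        year, val = points[i]
        # Only compare directly consecutive years.
        if year - prev_year == 1 and abs(val - prev_val) > max_jump:
            start = i
    return points[start:]


australia = [(2000, 59.8), (2001, 46.2)]
cleaned = drop_before_last_break(australia)
```

With the Australian figures, the 13.6-point drop exceeds the threshold, so only the 2001 value is kept.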

The selection of countries for which data is available represents high-income Western countries much better than the world’s other regions, which is bound to affect the overall picture.

Because the number of years for which historical data was available varied greatly between different countries, not all years for which data was available were used. Instead, I attempted to build a more balanced subset by picking only some years, far enough apart to exhibit clear changes in dietary patterns. The method used is extremely arbitrary, and probably affects the end result.
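One way such a selection could be made less ad hoc is sketched below. It is not the method actually used; the function name and the default `min_gap` of ten years are assumptions made for the example.

```python
def pick_spaced_years(years, min_gap=10):
    """Greedily pick years at least `min_gap` apart, starting from the latest."""
    chosen = []
    for year in sorted(set(years), reverse=True):
        # Keep a year only if it is far enough from the last one kept.
        if not chosen or chosen[-1] - year >= min_gap:
            chosen.append(year)
    return sorted(chosen)


picked = pick_spaced_years([1980, 1985, 1990, 1995, 2000, 2005, 2008])
```

Starting from the latest year guarantees that the most recent data point for each country is always included. The choice of gap is still arbitrary, but at least it is applied the same way to every country.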

The final dataset used for the visualization was created with a custom Python script from messy original data by a non-programmer, a process which is a highly probable source of error. The final data was superficially examined for flaws (and the script corrected several times accordingly), but it has not been scrutinized as rigorously and thoroughly as would be required for, say, scientific publication, and thus scripting errors remain a potential source of errors in the data. For those interested in assessing the data quality themselves, the processed data can be downloaded as a tsv file (which is similar to csv, except using tabs instead of commas as separators) here.
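A tsv file can be read with Python's standard `csv` module by setting the delimiter to a tab. The sketch below uses an in-memory sample, because the real file's column names are not listed here; `country`, `year` and `overweight_pct` are hypothetical headers.

```python
import csv
import io


def read_tsv(handle):
    """Read a tab-separated file with a header row into a list of dicts."""
    return list(csv.DictReader(handle, delimiter="\t"))


# Hypothetical header and row, standing in for the downloaded file.
sample = io.StringIO(
    "country\tyear\toverweight_pct\n"
    "Exampleland\t2005\t48.0\n"
)
rows = read_tsv(sample)
```

For the downloaded file, pass `open(path, newline="", encoding="utf-8")` instead of the `StringIO` object, assuming the file is UTF-8 encoded. All values come back as strings, so numeric columns need converting with `float()` or `int()` before any calculation.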